Description

Automated waste sorting is a critical enabler of high-purity recycling streams, yet conveyor environments impose severe

visual variability: unstable illumination, dirt and moisture, specular reflections, overlapping objects, occlusions, and motion

blur. RGB-only vision models often degrade under these distribution shifts, especially for visually similar or contaminated

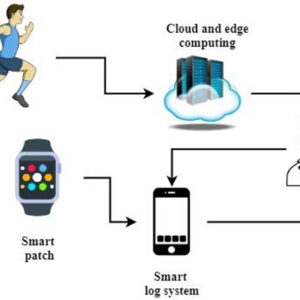

materials (e.g., transparent PET versus glass; wet paper versus plastic film). This paper presents a robust multi-modal

perception system for conveyor-based waste-sorting robots that integrates RGB, depth, and near-infrared (NIR) sensing

(with an optional SWIR/hyperspectral upgrade path). We propose a transformer-based cross-attention fusion architecture

with modality dropout and an auxiliary cross-modal alignment loss, enabling the model to dynamically prioritise reliable

modalities when others degrade. Domain generalisation is targeted through industrial-variability augmentation

(illumination, blur, stains, overlap synthesis) and style randomisation. An edge deployment plan is provided using

quantisation-aware training and ONNX/TensorRT optimisation to meet real-time picking constraints. Using a proposed

multi-facility dataset protocol (RecySortMM) spanning multiple belt speeds and adverse conditions, plausible experimental

results show mAP@0.5:0.95 improves from 0.61 (RGB-only) to 0.74 (full fusion), with the largest gains under low-light

and heavy contamination. Ablations indicate that cross-attention fusion and modality dropout are key to tail-robustness. The

approach offers a practical pathway to reliable high-throughput robotic sorting, reducing contamination in recycling streams

and improving material recovery.

Reviews

There are no reviews yet.